Modern CI/CD platform with Tekton CD, Flux CD and the Helm Operator

Goals

My primary goal was to embed some CI pipelines into a middleware platform, itself designed to be deployable across my company’s entities. The only requirement to run the platform is Kubernetes.

It can be a fully managed Kubernetes cluster (run by a cloud provider) or a good old self-managed kubeadm cluster.

We also needed automatic deployments of Helm releases, triggered by Git actions, so I implemented a GitOps-like CD pattern.

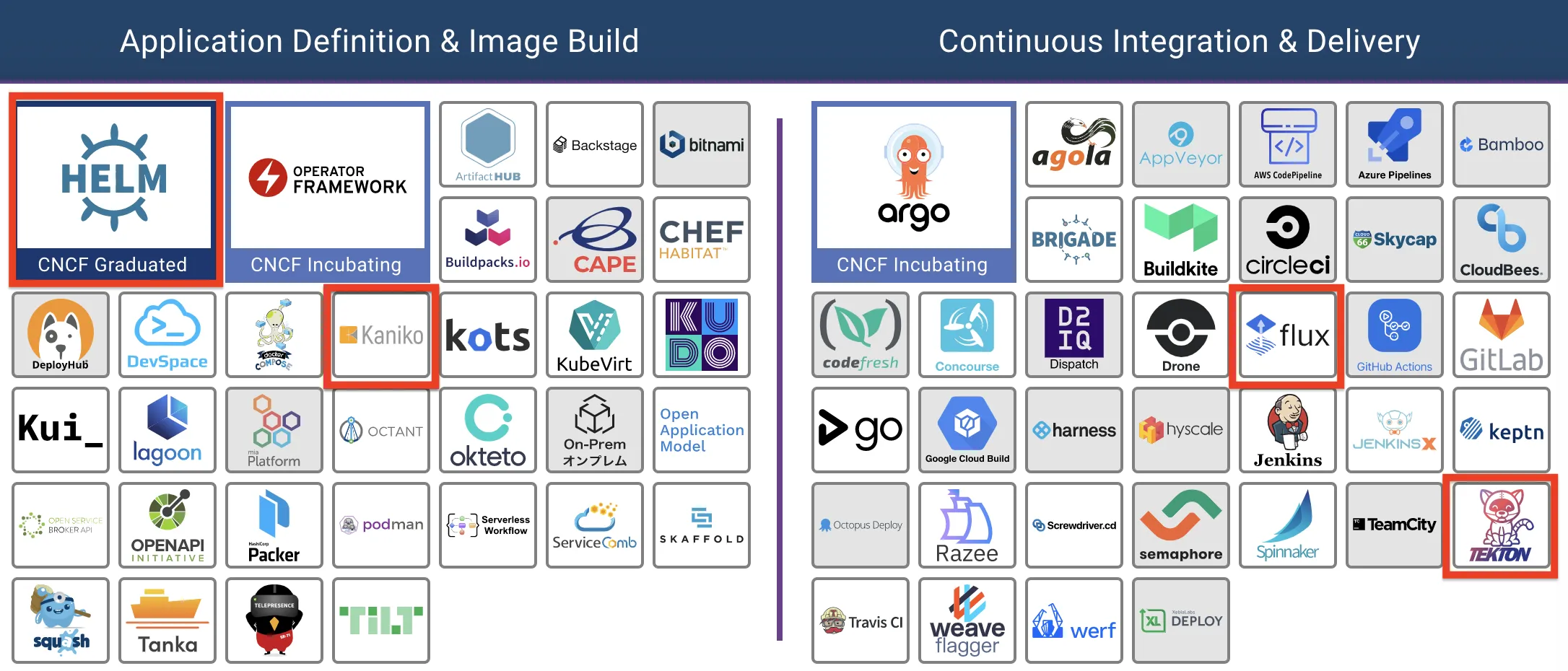

In this article, I will explain how I set up this CI/CD platform and my motivations for choosing these pieces of software. Basically, they are all in the CNCF landscape, namely Tekton, Kaniko, FluxCD and the Helm Operator.

Context & Big Picture

So, I was looking for a modern, lightweight, GitOps-flavored and portable way of defining my CI/CD pipelines.

Of course, these choices are debatable, but here we are :) For the first deployment, I’m running this stack on my company’s premises (self-managed kubeadm).

Why lightweight? Because, in a resource-constrained environment, I didn’t want the full-stack software factory that GitLab or Jenkins offers. Also, I adhere to the Unix philosophy, where every piece of software has one single purpose and does it well.

Finally, I wanted the best possible integration with Kubernetes and its extensible API philosophy. I really like the Operator pattern, so I was not afraid of installing many Custom Resource Definitions (CRDs).

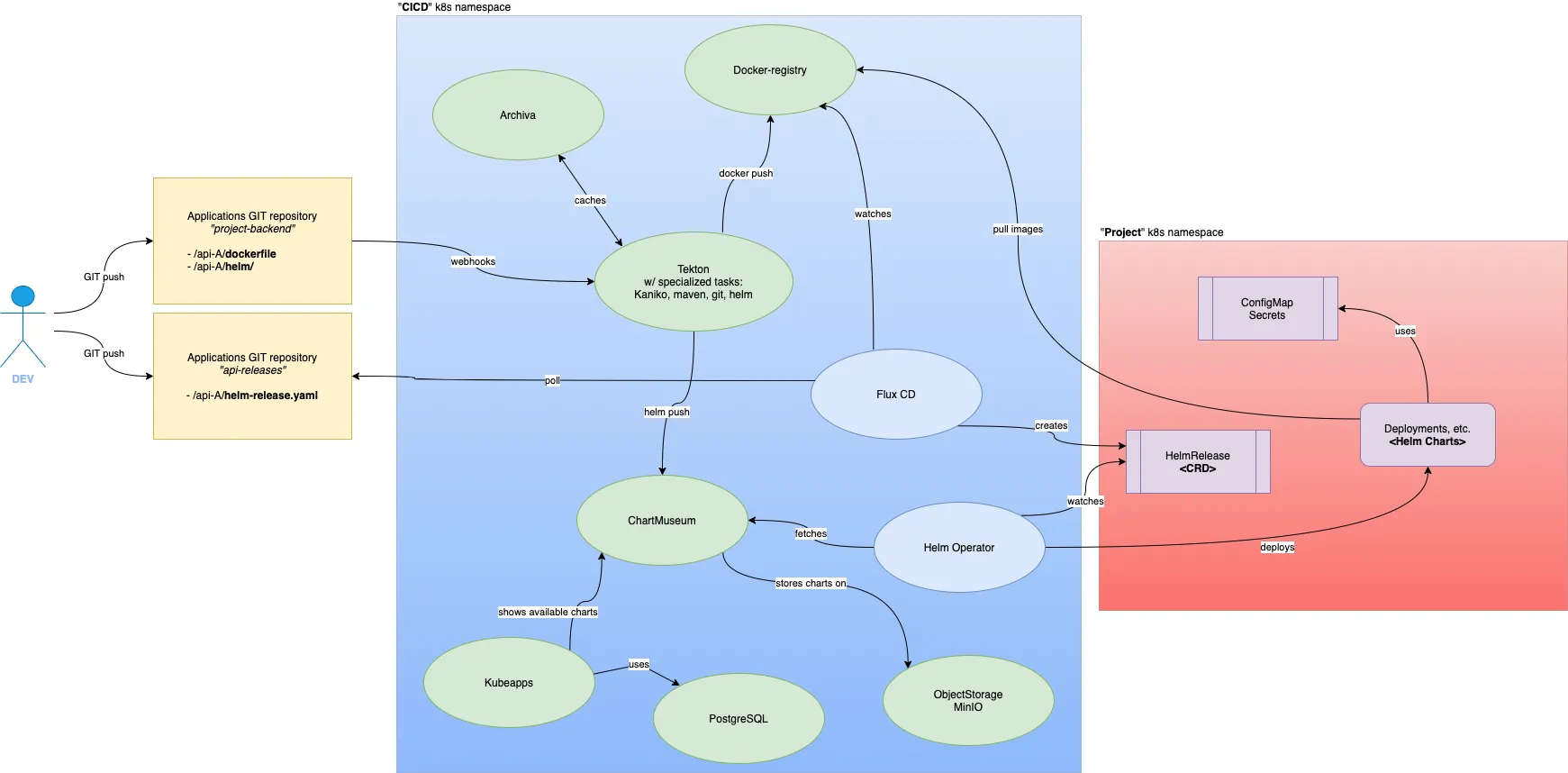

Below is the GitOps architecture I’ll speak about (click to zoom in):

Why so many?

To achieve my goals, I ended up with a lot of components. Here is the rationale.

6 of them are asset registries, or the storage backing them:

- Docker registry, no need to explain its purpose
- Archiva … yes! A little dusty, but a fit-for-purpose Maven repository
- Chartmuseum, as the Helm chart registry
- … itself backed by Minio for chart archive storage
- Kubeapps (optional), for web-based administration convenience
- … itself backed by PostgreSQL

Tekton is the CI controller and makes use of build tasks, like the one based on Kaniko.

…and the last 3 are for CD purposes:

- FluxCD, for pure GitOps
- Helm Operator, in charge of applying Helm Custom Resources (CRs)
- Helm exporter (not shown in the diagram), for Prometheus-formatted metrics

More details below.

| If you don’t know what Kubeapps is, think of it as a Helm chart browser in which you can synchronise multiple Helm repositories (i.e. public and private). By the way, I had to modify the original Helm chart to make it use an existing PostgreSQL database. |

Continuous Deployment

Don’t ask me why, but I began with the CD stack. The priority was to let developers decide when to roll out new versions. I was torn between ArgoCD and FluxCD for a long time, and I chose the latter.

| Argo and Flux declared they have joined forces to give birth to a new GitOps product. |

So, here is the scenario when a developer wants to deploy a given version of an API, on our "middleware platform":

- the developer updates the HelmRelease CR (Custom Resource) in the dedicated Git repo
- FluxCD fetches this manifest from Git and applies it to the Kubernetes cluster
- the Helm Operator watches for HelmRelease CRs and executes helm commands accordingly

🎉 Power given back to devs!

Here is an example of a HelmRelease CR, for one of our APIs:
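A minimal sketch of what such a manifest can look like, assuming the Helm Operator's `helm.fluxcd.io/v1` API and a chart served by Chartmuseum. The release name, namespace, chart URL, version and values are hypothetical placeholders, not the ones used on our platform.

```yaml
# Hypothetical HelmRelease: every name, URL and version below is a placeholder.
apiVersion: helm.fluxcd.io/v1
kind: HelmRelease
metadata:
  name: api-launcher
  namespace: apis                # assumed target namespace
spec:
  releaseName: api-launcher      # name of the Helm release to create or upgrade
  chart:
    repository: https://chartmuseum.example.corp/   # assumed Chartmuseum URL
    name: api-launcher           # chart name in that repository
    version: 1.2.3               # bumping this in Git triggers a rollout
  values:                        # overrides merged into the chart's values.yaml
    image:
      repository: registry.dev.r3pf.mycompany.corp/api-launcher
      tag: 1.2.3
```

With this layout, a rollout boils down to a commit that changes `spec.chart.version` or one of the `values`.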

That’s all the information Helm needs to deploy a chart, i.e. to create a new "release".

Continuous Integration

This part was a little trickier because I had completely underestimated the role of a CI platform. Though my needs were basic, I ended up with this many components.

In my quest for lightweight components and CR-based, declarative workflows, I stumbled upon Tekton.

Tekton modules and loosely coupled architecture

Tekton has 3 main modules:

- Tekton Pipelines, for defining your CI/CD workflows
- Tekton Triggers, to add some glue between pipelines and external events (cloudevents.io compatible, common Git events, Knative Eventing, etc.)
- Tekton Dashboard, a web-based admin console, for a quick overview of your pipelines, logs, and more

Not to mention the very handy Tekton CLI (tkn), which inspects Tekton CRs and quickly gives you some insight into the state of your builds.

Tekton Pipelines provides Kubernetes-style resources for declaring pipelines. They use containers as their building blocks.

Implementing a build pipeline with Tekton

Tekton elements are loosely coupled: Pipelines use Tasks, which are themselves composed of steps. Pipelines and Tasks are meant to be reusable with different parameters, so to run them you create instances of them, called PipelineRun and TaskRun.

In my case, I created these resources:

- a single Pipeline modeling the different tasks needed to build an API
- one PipelineRun per API
- some more Tekton Triggers resources for bridging and mapping Git events to my Tekton platform.

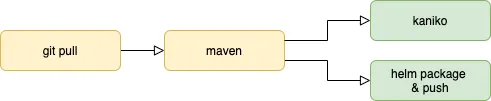

Actually, here is how steps are ordered in my API Pipeline:

Here is the Pipeline Custom Resource:
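A minimal sketch of such a Pipeline, not the exact manifest. The pipeline name, its parameters and its workspaces match what the TriggerTemplate further down passes in; the three tasks and their order are assumptions, modeled on the `git-clone`, `maven` and `kaniko` Tasks from the Tekton catalog, whose parameter and workspace names can differ between catalog versions.

```yaml
# Sketch only: the task list, task names and task parameters are assumptions.
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: api-pipeline
  namespace: cicd
spec:
  params:
    - name: repo-url
      type: string
    - name: branch-name
      type: string
    - name: image
      type: string
    - name: apicontext          # sub-directory of the API inside the repo
      type: string
  workspaces:
    - name: shared-data         # PVC shared by all tasks
    - name: maven-config        # ConfigMap holding settings.xml
    - name: maven-repo          # PVC for the local Maven cache; a custom Maven
                                # task would mount it, it is unused in this sketch
  tasks:
    - name: fetch-sources
      taskRef:
        name: git-clone
      params:
        - name: url
          value: $(params.repo-url)
        - name: revision
          value: $(params.branch-name)
      workspaces:
        - name: output
          workspace: shared-data
    - name: build-api
      runAfter: [fetch-sources]
      taskRef:
        name: maven
      params:
        - name: CONTEXT_DIR
          value: $(params.apicontext)
        - name: GOALS
          value: ["clean", "package"]
      workspaces:
        - name: source
          workspace: shared-data
        - name: maven-settings
          workspace: maven-config
    - name: build-image
      runAfter: [build-api]
      taskRef:
        name: kaniko
      params:
        - name: IMAGE
          value: $(params.image)
        - name: CONTEXT
          value: $(params.apicontext)
      workspaces:
        - name: source
          workspace: shared-data
```

The `runAfter` entries are what serialize the tasks; without them, Tekton schedules tasks in parallel.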

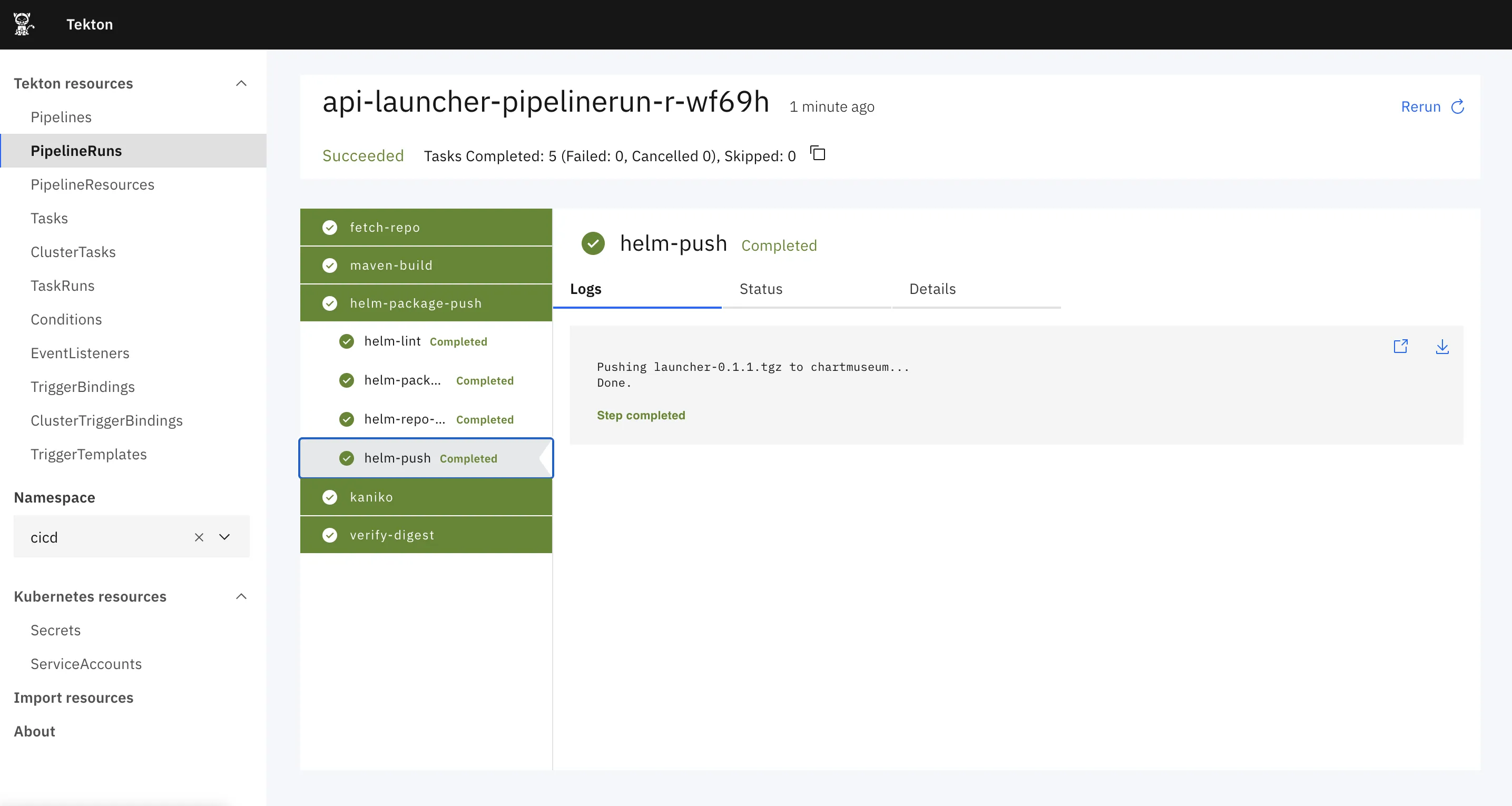

And a sample result of its execution:

| Currently, one major drawback of Tekton is that you cannot use object storage to store and share your workspace between tasks. That means you have to use PVs. But, as I’m running Tekton on-prem, with pseudo-dynamic PV management, I could only create a small set of PVs dedicated to build jobs. I wish I could use my Minio cluster to store these workspaces and thus increase the number of parallel builds. |

Nice to know: formerly, when designing a pipeline, you were advised to use the PipelineResource CR as a pass-through for standard objects like Git repos or container images. However, this CR has not yet been migrated from v1alpha1 to v1beta1, and Tekton developers therefore suggest replacing PipelineResources with Tasks. There is a very nice list of ready-to-use Tasks in the official repo.
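For reference, a workspace backed by a PV is requested through an ordinary PersistentVolumeClaim. The sketch below uses the claim name `tekton-workspace` referenced by the TriggerTemplate later in this article; the access mode and size are assumptions.

```yaml
# Sketch of the PVC backing the shared workspace; size and access mode are assumed.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: tekton-workspace
  namespace: cicd
spec:
  accessModes:
    - ReadWriteOnce        # only one node can mount the volume read-write
  resources:
    requests:
      storage: 1Gi
```

Because every PipelineRun that references this claim shares the same volume, the number of available claims effectively caps the number of parallel builds.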

Webhooks with Tekton Triggers

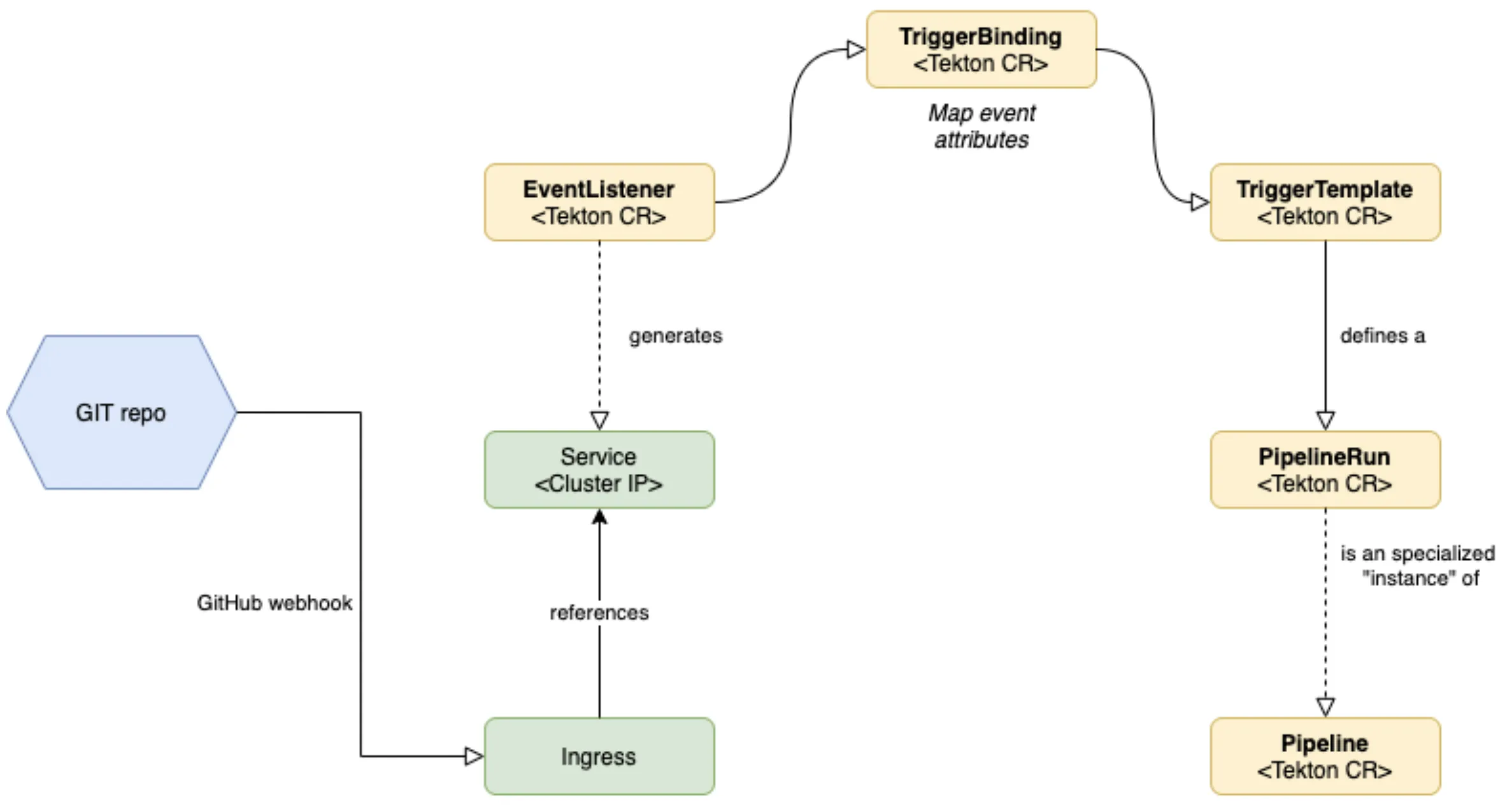

A picture is worth a thousand words…

EventListeners expose an addressable "Sink" to which incoming events are directed. Users can declare TriggerBindings to extract fields from events, and apply them to TriggerTemplates in order to create Tekton resources. In addition, EventListeners allow lightweight event processing using Event Interceptors.

Below is the example of a TriggerTemplate for the API named "Launcher":

```yaml
apiVersion: triggers.tekton.dev/v1alpha1
kind: TriggerTemplate
metadata:
  name: api-launcher-trigger-template
  namespace: cicd
spec:
  params:
    - default: master
      description: The git revision
      name: gitrevision
    - description: The git repository url
      name: gitrepositoryurl
    - default: This is the default message
      description: The message to print
      name: message
    - description: The Content-Type of the event
      name: contenttype
  resourcetemplates:
    - apiVersion: tekton.dev/v1beta1
      kind: PipelineRun  # (1)
      metadata:
        generateName: api-launcher-pipeline-run-
      spec:
        params:
          - name: repo-url
            value: >-
              https://<ID>:<token>@github.mycompany.corp/org/r3pf-backend.git
          - name: branch-name
            value: bco-remove-jib
          - name: image
            value: >-  # (2)
              registry.dev.r3pf.mycompany.corp/api-launcher:$(tt.params.gitrevision)
          - name: apicontext
            value: apis/apis-async/api-launcher/
        pipelineRef:
          name: api-pipeline
        workspaces:
          - name: shared-data
            persistentVolumeClaim:
              claimName: tekton-workspace
          - configmap:
              name: maven-config
            name: maven-config
          - name: maven-repo
            persistentVolumeClaim:
              claimName: maven-repo
```

(1) The TriggerTemplate defines a PipelineRun spec.

(2) You can reuse parameters from the event; here the git revision, extracted from the GitHub JSON payload.
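To complete the picture, here is a sketch of the two other Triggers resources that would feed this template: a TriggerBinding that extracts fields from a GitHub push payload, and an EventListener that wires the binding to the template. Only the template name and the parameter names come from the manifest above; the resource names, the service account, the webhook secret and the payload paths are assumptions, and the exact field names vary between Tekton Triggers releases.

```yaml
# Sketch only: names, secret and payload paths are assumptions.
apiVersion: triggers.tekton.dev/v1alpha1
kind: TriggerBinding
metadata:
  name: api-launcher-trigger-binding
  namespace: cicd
spec:
  params:
    - name: gitrevision
      value: $(body.head_commit.id)       # commit SHA from the push event
    - name: gitrepositoryurl
      value: $(body.repository.url)
    - name: contenttype
      value: $(header.Content-Type)
---
apiVersion: triggers.tekton.dev/v1alpha1
kind: EventListener
metadata:
  name: api-launcher-listener
  namespace: cicd
spec:
  serviceAccountName: tekton-triggers-sa  # needs RBAC to create PipelineRuns
  triggers:
    - name: api-launcher-push
      interceptors:
        - github:                         # validates the webhook signature
            secretRef:
              secretName: github-webhook-secret
              secretKey: secretToken
            eventTypes:
              - push
      bindings:
        - ref: api-launcher-trigger-binding
      template:
        name: api-launcher-trigger-template
```

The EventListener is exposed as a Kubernetes Service; the Git server's webhook has to be pointed at it, typically through an Ingress.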

Conclusion

This shiny stack has some drawbacks. The learning curve is quite steep with Tekton and you will end up writing a lot of YAML.

A lot of these tasks would be just one click away in any popular CI platform. Here you will have to do it all yourself.

That’s the price to pay when you want total control over the resources used by your CI software and platform.

The CD stack, on the other hand, was pretty simple to deploy and get working. I can only recommend using FluxCD and the Helm Operator.

I guess that, in a more complex configuration, it would make sense to use Kustomize alongside FluxCD in order to override variables depending on the environment. On my end, I managed to do it by using Flux CD with a different Git branch per environment.

Leads

If I were running a platform on a public cloud provider, I would take a deeper look at Jenkins X, which is built on top of TektonCD. When I started working on this task, Jenkins X mainly supported deployments on GCP and AWS, through Terraform scripts.

Also, Kubestack might be worth a look if you like Terraform and frameworks 😄

Finally, it would be great to inject secrets dynamically. The Vault Agent Injector would meet that need and is now on our roadmap.